I once watched a clean-looking deploy drop a handful of checkout requests because the Node.js process treated SIGTERM like an interruption instead of a contract. Docker sent the signal, Kubernetes waited, the process exited, and the logs looked polite. The customers saw something less polite: requests that started, reached the database, and never got a response.

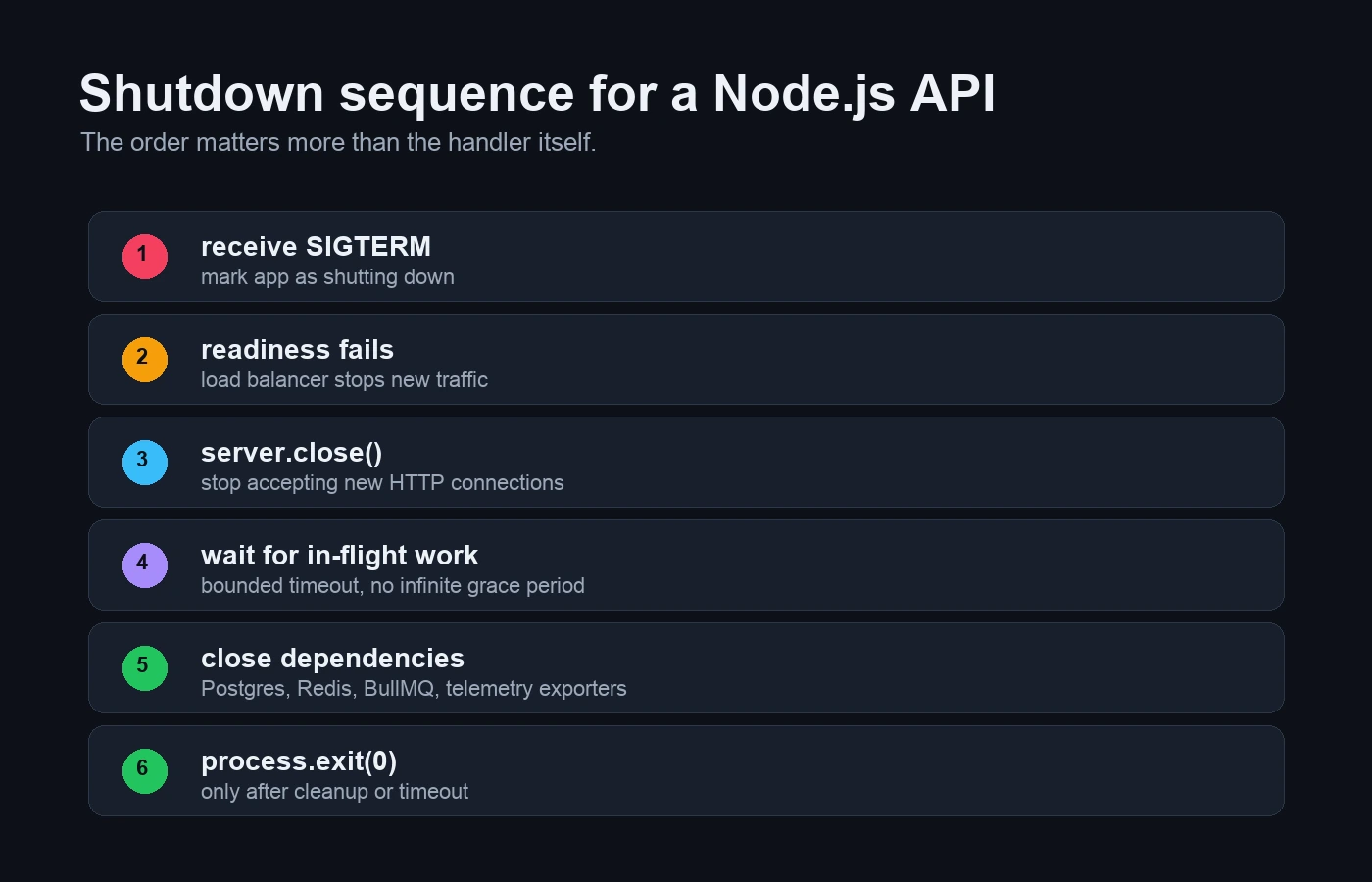

Graceful shutdown in Node.js is not a clever signal handler. It is an order of operations: stop receiving new traffic, drain in-flight work, close dependencies, flush telemetry, and exit before the platform gets impatient. If you run Express behind Docker, Kubernetes, PM2, systemd, or a VPS process manager, this belongs in the first production pass, not the postmortem.

The shutdown order that stops dropped requests

The sequence is boring on purpose. Boring shutdowns are the ones that do not turn a deploy into a partial outage.

- Receive

SIGTERMorSIGINT. - Mark the process as shutting down so readiness fails.

- Stop accepting new HTTP connections with

server.close(). - Let in-flight requests finish, but only inside a fixed deadline.

- Close database pools, Redis clients, queue workers, and exporters.

- Exit cleanly, or force exit when the deadline is reached.

The Node.js process signal docs are the base layer here: the process can listen for signals such as SIGTERM and SIGINT. The HTTP layer is separate. The HTTP server.close() docs matter because closing the server stops new connections but does not magically finish every side effect your app started.

The signal handler that should not exit immediately

This is the shape I start with in an Express API. It keeps state in one place, separates readiness from liveness, and avoids doing real cleanup twice.

import http from "node:http";

import process from "node:process";

import type { Express } from "express";

type ShutdownDependency = {

name: string;

close: () => Promise<void>;

};

export function installGracefulShutdown(options: {

app: Express;

server: http.Server;

dependencies: ShutdownDependency[];

timeoutMs?: number;

}) {

const timeoutMs = options.timeoutMs ?? 25_000;

let shuttingDown = false;

options.app.get("/livez", (_req, res) => {

res.status(200).json({ ok: true });

});

options.app.get("/readyz", (_req, res) => {

if (shuttingDown) {

res.status(503).json({ ok: false, reason: "shutting_down" });

return;

}

res.status(200).json({ ok: true });

});

async function shutdown(signal: NodeJS.Signals) {

if (shuttingDown) return;

shuttingDown = true;

console.info({ signal }, "shutdown started");

const deadline = setTimeout(() => {

console.error({ timeoutMs }, "shutdown timed out");

process.exit(1);

}, timeoutMs);

deadline.unref();

options.server.close(async (err) => {

if (err) {

console.error({ err }, "http server close failed");

}

for (const dep of options.dependencies) {

try {

await dep.close();

console.info({ dependency: dep.name }, "dependency closed");

} catch (closeErr) {

console.error({ err: closeErr, dependency: dep.name }, "dependency close failed");

}

}

clearTimeout(deadline);

console.info("shutdown finished");

process.exit(err ? 1 : 0);

});

options.server.closeIdleConnections?.();

}

process.once("SIGTERM", shutdown);

process.once("SIGINT", shutdown);

}That is not the whole story, but it is a real starting point. The key detail is readiness: once shuttingDown flips to true, a load balancer or Kubernetes readiness probe can stop sending fresh traffic while the process drains what it already has. On modern Node, server.close() handles more idle-connection cleanup than older releases, but I still call closeIdleConnections() as a harmless guard when the method exists.

Where Express needs to stop accepting traffic

import express from "express";

import { createServer } from "node:http";

import { pool } from "./db";

import { redis } from "./redis";

import { worker } from "./worker";

import { installGracefulShutdown } from "./shutdown";

const app = express();

app.get("/orders/:id", async (req, res) => {

// Real route code here.

res.json({ id: req.params.id });

});

const server = createServer(app);

server.listen(3000, () => {

console.log("api listening on :3000");

});

installGracefulShutdown({

app,

server,

timeoutMs: 25_000,

dependencies: [

{ name: "bullmq-worker", close: () => worker.close() },

{ name: "redis", close: () => redis.quit() },

{ name: "postgres", close: () => pool.end() },

],

});If the API uses Prisma, the Postgres close step is prisma.$disconnect(). If it uses pg.Pool, it is pool.end(). If it runs BullMQ workers, close the worker so it stops taking new jobs. The queue details are in the BullMQ background jobs guide; the connection budget is in the Postgres pooling guide.

Why keep-alive sockets outlive your grace period

One shutdown trap is HTTP keep-alive. A process can stop accepting new connections while existing sockets still sit around longer than your platform grace period. Newer Node versions have improved behavior around idle connections, and the HTTP server API also exposes connection-closing tools such as closeIdleConnections() and closeAllConnections(). I still do not start there. First I set sane server timeouts and test the normal drain path.

server.keepAliveTimeout = 5_000;

server.headersTimeout = 6_000;

server.requestTimeout = 30_000;If your service has long uploads, exports, or streaming responses, be more careful. For upload-heavy APIs, pair this with the S3 upload and streaming draft. A shutdown timeout that is perfect for JSON APIs can be hostile to a route that streams a large file.

Docker will kill the process if cleanup takes too long

Docker does not ask forever. The Docker stop flow sends a termination signal and then, after a timeout, sends a kill signal. Linux containers already default to SIGTERM, so STOPSIGNAL SIGTERM is mostly documentation unless your image or process manager changed it. The Docker stop docs are explicit about that two-step behavior. If your app needs 20 seconds to drain but the platform gives it 10, the platform wins.

# Dockerfile

STOPSIGNAL SIGTERM# docker-compose.yml

services:

api:

build: .

stop_grace_period: 30sThat does not mean every app gets a huge grace period. A long grace period hides bad cleanup and slows deploys. I usually start with 25-30 seconds for normal APIs, then measure. If a route needs longer, I ask whether it should be a background job instead of a request. The Dockerizing Node.js guide covers the image side; graceful shutdown is the runtime contract.

Kubernetes can still route traffic while shutdown propagates

Kubernetes adds one more moving part: traffic routing. The pod termination docs describe the grace period, and the probe docs explain readiness, liveness, and startup probes. For Node APIs, the important split is simple:

- Liveness: is the process alive enough that Kubernetes should not restart it?

- Readiness: should this pod receive traffic right now?

- Startup: does this slow-starting process need extra time before liveness begins?

terminationGracePeriodSeconds: 30

containers:

- name: api

image: nodewire-api:latest

ports:

- containerPort: 3000

readinessProbe:

httpGet:

path: /readyz

port: 3000

periodSeconds: 5

failureThreshold: 1

livenessProbe:

httpGet:

path: /livez

port: 3000

periodSeconds: 10

failureThreshold: 3Do not make liveness depend on Postgres. A temporary database blip should not necessarily restart every API pod at once. Readiness can be stricter because it decides traffic, not process survival. The dedicated health-check article in this series will go deeper, but the rule here is enough: shutdown should make readiness fail before the process exits, shortening the window where traffic can still arrive while EndpointSlice and load-balancer state catches up.

Background workers keep taking jobs unless you stop them

HTTP is only half the app if a worker sits beside it. A BullMQ worker should stop claiming new jobs and finish or release the current job according to your retry policy. A graceful API shutdown that forgets the worker can still duplicate work, lose lock renewal, or leave a job in a confusing state.

I prefer separate processes for API and worker roles. That lets each process own a simpler shutdown path: the API drains requests; the worker stops taking jobs and closes Redis. If both roles run in one process, the shutdown function must close both. This is where the Node cron jobs guide and the BullMQ guide connect to deploy safety.

Telemetry should not hold shutdown hostage

Observability tools are useful during shutdown because they show whether deploys are clean. They should not hold the process hostage. If the app uses OpenTelemetry, flush spans with a timeout. If it uses Sentry, close the client with a bounded wait. The OpenTelemetry draft in this batch covers traces; the shutdown-specific rule is smaller: export what you can, then let the platform finish the lifecycle.

The shutdown test that catches fake graceful handlers

I do not trust graceful shutdown until I have interrupted a real request. My local smoke test is crude and useful:

npm run build

node dist/server.js &

PID=$!

curl http://localhost:3000/slow-route &

sleep 1

kill -TERM "$PID"

wait "$PID"The expected behavior is not just “the process exited.” I want to see readiness fail, no new requests accepted, the slow request either finish or receive a controlled error, dependency close logs, and an exit code that matches the outcome. In CI, I turn that into an integration test for the shutdown module and a deploy smoke test in the GitHub Actions pipeline.

The mistakes that turn shutdown into a polite crash

- Calling

process.exit()immediately. That skips the drain and turns graceful shutdown into a decorative log line. - Keeping readiness green during shutdown. The load balancer keeps sending new traffic to a process that is trying to leave.

- No hard timeout. One stuck request can hold a deploy forever until the platform kills it anyway.

- Closing the database before HTTP drains. In-flight handlers fail because their pool vanished mid-request.

- Restarting every pod when Postgres is slow. Liveness should not be a dependency panic button.

The checklist I run before trusting a deploy

- Handle

SIGTERMandSIGINTonce. - Expose separate readiness and liveness routes.

- Flip readiness to false immediately on shutdown.

- Call

server.close()before closing dependencies. - Set a shutdown deadline shorter than the platform grace period.

- Close Postgres/Prisma, Redis, queues, and telemetry with bounded waits.

- Test shutdown during an active request.

- Log the signal, deadline, dependency close results, and final exit path.

FAQ

What signal should a Node.js app handle for graceful shutdown?

Handle SIGTERM for Docker, Kubernetes, and most process managers. Handle SIGINT as well for local Ctrl+C behavior. Keep the shutdown function idempotent so the second signal does not run cleanup twice.

Does server.close() finish all active requests?

It stops the server from accepting new connections and invokes its callback after existing connections close. You still need a deadline, dependency cleanup, and a strategy for long-lived sockets or streams.

Should Kubernetes liveness check the database?

Usually no. If the database is temporarily unavailable, restarting every API pod can make the incident worse. Use readiness for dependency availability and liveness for process health.

How long should shutdown timeout be?

For normal JSON APIs, I usually start around 25-30 seconds and measure. The application timeout should be shorter than the Docker or Kubernetes grace period, leaving a little space for final logs and forced cleanup.

What about WebSockets?

WebSockets need a separate drain plan: stop accepting upgrades, notify clients, close sockets after a deadline, and make reconnect behavior explicit. The Socket.io guide is the right companion for that path.

When graceful shutdown is the wrong tool

I do not use graceful shutdown to hide slow routes, broken idempotency, or jobs that should have been moved out of the request path. If a request normally takes 45 seconds, a 30-second shutdown window will not save it. That work belongs in a queue, a resumable workflow, or a separate worker.

For normal APIs, though, I treat graceful shutdown as table stakes. If a Node.js service cannot deploy without dropping ordinary requests, I do not care how clean the Dockerfile looks. The app is not production-shaped yet.