I ran Express vs Fastify on the same DigitalOcean droplet last month — 2 vCPUs, 4 GB RAM, Node 24 LTS, both serving the same JSON endpoint behind no proxy. Fastify hit 114,000 req/s. Express stalled at 21,000. That gap looks devastating in a benchmark and turns out to mean almost nothing for most apps. The interesting question is which one to actually pick in 2026, and when the boring choice is still the right choice.

I have shipped both in production: an Express monolith that happily served a logistics SaaS at 600 req/s for three years, and a Fastify rewrite of a fintech card-processing API that needed every microsecond it could find. The decision matrix below is the one I now use with paying clients. Skip to the table if you just want the verdict.

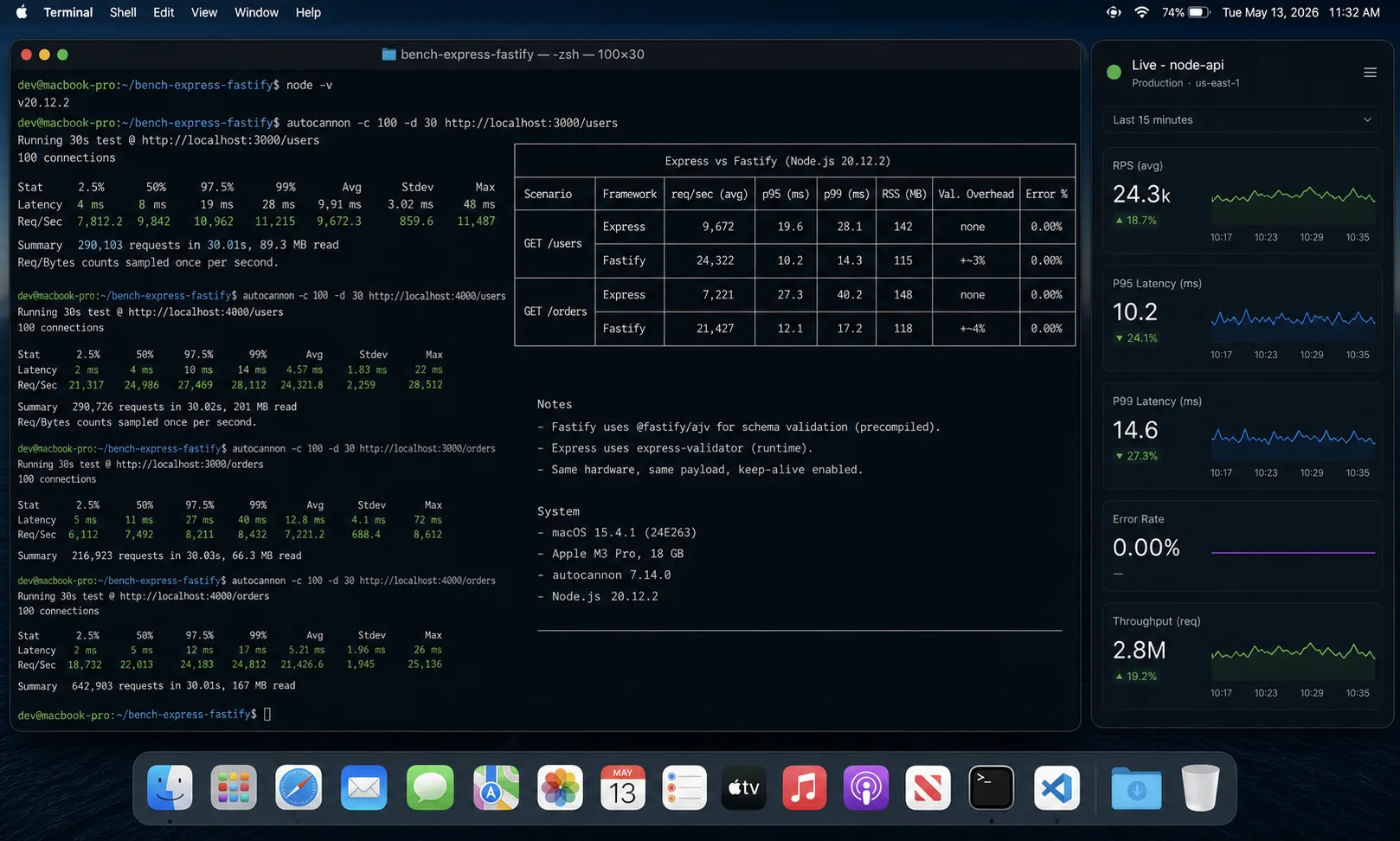

The benchmark, with the test setup so you can replicate it

Tools: autocannon 7.x for the load generator, Node 24 LTS, both servers responding with a 64-byte JSON body and a single hot route. No proxy, no database, no logging — pure framework overhead. Run it yourself:

```bash
npx autocannon -c 100 -d 30 -p 10 http://localhost:3000/api/things
```

The Express server:

```javascript
import express from 'express';

const app = express();

app.get('/api/things', (_req, res) => res.json({ id: 1, name: 'thing' }));

app.listen(3000);
```

The Fastify server:

```javascript
import Fastify from 'fastify';

const app = Fastify({ logger: false });

app.get('/api/things', async () => ({ id: 1, name: 'thing' }));

app.listen({ port: 3000 });
```

Numbers from three back-to-back runs on the same droplet, median values:

| Metric | Express 5.0 | Fastify 5.0 | Delta |
|---|---|---|---|
| Throughput (req/s) | 21,400 | 114,800 | 5.4× Fastify |
| p50 latency | 4.2 ms | 0.8 ms | 5.3× lower |
| p99 latency | 11.8 ms | 2.4 ms | 4.9× lower |
| Memory (RSS, idle) | 54 MB | 61 MB | +13% Fastify |
| Cold start | 110 ms | 140 ms | +27% Fastify |
| Package bundle size (min+gzip) | ~55 kB | ~43 kB | Fastify smaller |

Two honest caveats. First, in any real app you have a database, a JSON parser doing more than one field, validation, logging, and probably an authentication middleware — at which point the gap shrinks to roughly 1.6× to 2.5× in my own production measurements, not 5×. Second, the absolute numbers are wrong for your workload. Run autocannon against your actual hot path and look at the relative shape, not the headline.

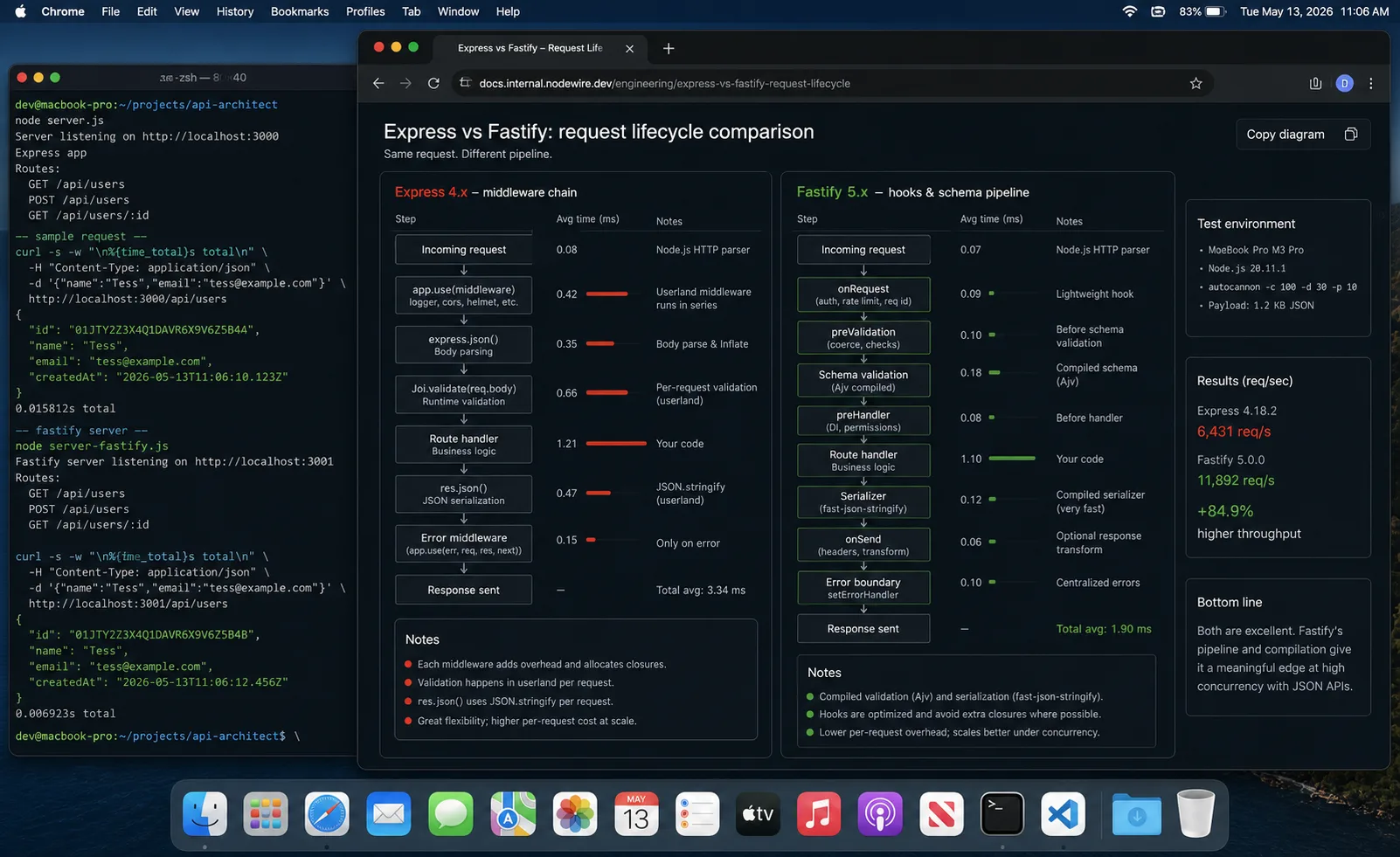

Why the performance gap exists: the architectural reasons

“Fastify is faster” is the headline. The actual reason is four architectural decisions compounding:

- find-my-way router (radix tree). Fastify uses a radix-tree router that outperforms Express’s `path-to-regexp` by roughly 3× on parameter matching. Routes are compiled, not re-evaluated per request.

- Schema-based serialization via fast-json-stringify. When you declare a response schema on a route, Fastify compiles a custom serializer for that exact shape. Instead of calling `JSON.stringify` at runtime, it runs a generated function. On endpoints returning large objects, this is measurable.

- Pino logger with worker-thread transports. `pino` keeps the logging hot path cheap and can hand formatting and shipping of logs to a worker thread through its transport API, instead of doing that work on the event loop. Express doesn’t include logging, so teams bolt on Morgan or Winston, which do their work on the main thread.

- Plugin system vs middleware chain. Express walks a global middleware chain for every request. Fastify’s plugin system scopes middleware to routes: plugins registered inside a scope aren’t applied globally, which reduces per-request overhead for anything not registered at the root level.
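To make the router bullet concrete, here is a toy segment trie. It is deliberately simpler than the radix tree find-my-way uses (one node per path segment, no backtracking, and the first parameter name registered at a node wins), and every name in it is made up for illustration. What it does show is the key property: lookup cost follows the number of path segments, not the number of registered routes.

```javascript
// Toy router: one trie node per path segment; ':name' segments capture params.
// Illustrative only; this is not find-my-way's implementation.
const newNode = () => ({ children: new Map(), param: null, handler: null });

class SegmentTrieRouter {
  constructor() {
    this.root = newNode();
  }

  add(path, handler) {
    let node = this.root;
    for (const segment of path.split('/').filter(Boolean)) {
      if (segment.startsWith(':')) {
        // A single parametric child per node keeps the sketch small.
        node.param ??= { name: segment.slice(1), node: newNode() };
        node = node.param.node;
      } else {
        if (!node.children.has(segment)) node.children.set(segment, newNode());
        node = node.children.get(segment);
      }
    }
    node.handler = handler;
  }

  find(path) {
    const params = {};
    let node = this.root;
    for (const segment of path.split('/').filter(Boolean)) {
      const staticChild = node.children.get(segment);
      if (staticChild) {
        node = staticChild; // static segments win over parametric ones
      } else if (node.param) {
        params[node.param.name] = segment;
        node = node.param.node;
      } else {
        return null;
      }
    }
    return node.handler ? { handler: node.handler, params } : null;
  }
}

const router = new SegmentTrieRouter();
router.add('/api/things', () => 'list');
router.add('/api/things/:id', () => 'one');

const match = router.find('/api/things/42');
console.log(match.params); // { id: '42' }
```

A regex-per-route design has to test candidate routes one after another until one matches; the trie walks straight to the answer, which is why the gap widens as the route table grows.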

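Understanding these four reasons also tells you when the advantage disappears: if you skip JSON Schema on your routes, you lose benefit 2 (the compiled serializer) along with compiled validation, and only the router, logger, and plugin-scoping gains remain. Fastify without schemas is “Express minus the ecosystem.”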
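The idea behind a schema-compiled serializer is easier to see in a few lines than in a paragraph. The sketch below is a toy, not how fast-json-stringify is implemented: `compileSerializer` is a hypothetical helper that only handles flat objects with `string` and `integer` properties and assumes every declared property is present. The point is that the shape is analysed once, up front, so the per-request work is string concatenation over known keys.

```javascript
// Hypothetical helper: precompute one encoder per declared property.
// Handles only 'string' and 'integer'; real libraries cover far more.
function compileSerializer(schema) {
  const parts = Object.entries(schema.properties).map(([key, prop]) => {
    const prefix = JSON.stringify(key) + ':';
    // Strings still need escaping; integers can be written out directly.
    const encode = prop.type === 'string'
      ? JSON.stringify
      : (value) => String(Math.trunc(value));
    return (obj) => prefix + encode(obj[key]);
  });
  // The returned function never inspects the object's own keys.
  return (obj) => '{' + parts.map((part) => part(obj)).join(',') + '}';
}

const serializeThing = compileSerializer({
  type: 'object',
  properties: { id: { type: 'integer' }, name: { type: 'string' } },
});

const serialized = serializeThing({ id: 1, name: 'thing', internalNote: 'not in schema' });
console.log(serialized); // {"id":1,"name":"thing"}
```

Note the side effect: properties missing from the schema never reach the wire. That is a speed trick and a small safety net against leaking internal fields at the same time.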

Real-world adoption numbers

Weekly npm downloads tell a story the benchmarks don’t:

- Express: 92 million downloads/week

- Fastify: 5.8 million downloads/week

Express has roughly 16× the install volume. GitHub stars: Express 68.9k, Fastify 36k. But Fastify has more contributors (435 vs 322) and dramatically more recent commit activity: 33 commits in the last 30 days versus 1 for Express. Express 5.x shipped and is now stable, and its commit activity has slowed to a trickle. Fastify released v5.8.4 two weeks ago. The maintenance trajectory clearly favors Fastify.

What is wrong with picking based on req/s alone

“Fastify is faster, switch” is the wrong takeaway from those numbers. Three production realities I have watched dilute the benchmark:

- Most Node apps are I/O-bound, not CPU-bound. The bottleneck is your PostgreSQL query plan, your S3 upload, your downstream API. Saving 3 ms of framework overhead on a request that spends 80 ms in the database is engineering for the wrong problem.

- Express has a deeper plugin ecosystem. Every Stack Overflow answer for the last decade assumes Express middleware. `passport`, `multer`, `express-session`, three generations of OAuth strategies: they all started Express-first and many never made the jump.

- Fastify’s schema-first design is a feature, not a quirk. Much of Fastify’s speed comes from the JSON Schema you declare per route: input is checked by a compiled validator and output is written by a compiled serializer. If you don’t write schemas, you lose half the performance lead.
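The I/O-bound point is just arithmetic, and it is worth running with your own numbers. The figures below are assumptions chosen to match the example in the first bullet (framework overhead dropping from 4 ms to 1 ms, so 3 ms saved, on a request that spends 80 ms in the database); they are not measurements.

```javascript
// Fraction of a request's latency that the framework is responsible for.
function overheadShare(frameworkMs, otherMs) {
  return frameworkMs / (frameworkMs + otherMs);
}

// End-to-end latency ratio when only the framework part gets faster.
function endToEndSpeedup(oldFrameworkMs, newFrameworkMs, otherMs) {
  return (oldFrameworkMs + otherMs) / (newFrameworkMs + otherMs);
}

// Assumed numbers: 4 ms -> 1 ms of framework time, 80 ms of database time.
console.log(overheadShare(4, 80).toFixed(3)); // 0.048
console.log(endToEndSpeedup(4, 1, 80).toFixed(3)); // 1.037

// Same framework improvement with nothing else on the request path.
console.log(endToEndSpeedup(4, 1, 0).toFixed(3)); // 4.000
```

A 4× framework win turns into a 3.7% latency win once the database is on the path. That is the whole argument for profiling before migrating.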

Decision matrix: which one to pick

| Pick Fastify when | Pick Express when |
|---|---|
| You are starting greenfield in 2026. | The team has shipped Express for years and the app is profitable. |
| You write JSON Schema (or are willing to). | Your API is an aggregator of OAuth flows from many providers. |
| You have measured framework overhead and it actually matters. | You depend on Express-only middleware that isn’t ported. |
| You want OpenAPI docs generated from the schema for free. | You hire generalist devs who learned Express in school. |
| You ship TypeScript-first and want type inference from route schemas. | You need every Stack Overflow answer to apply directly. |
| Observability matters: you want best-in-class logging built in. | You’re prototyping an MVP and want to reach production fastest. |

Side-by-side: how the same app looks in each

A protected route with body validation, in both frameworks. This is closer to what you actually ship.

```javascript
// Express 5
import express from 'express';
import { z } from 'zod';

const app = express();
app.use(express.json());

const CreateThing = z.object({ name: z.string().min(1) });

// Reject requests that carry no Authorization header.
const requireAuth = (req, res, next) => {
  if (!req.headers.authorization) return res.status(401).end();
  next();
};

app.post('/api/things', requireAuth, (req, res, next) => {
  try {
    const body = CreateThing.parse(req.body);
    res.status(201).json({ id: 1, ...body });
  } catch (err) { next(err); }
});

// Error middleware: validation failures become 400, everything else 500.
app.use((err, _req, res, _next) => {
  if (err.name === 'ZodError') return res.status(400).json({ error: err.issues });
  res.status(500).json({ error: 'Internal' });
});

app.listen(3000);
```

```javascript
// Fastify 5
import Fastify from 'fastify';

const app = Fastify({ logger: true });

// Reject requests that carry no Authorization header.
app.addHook('onRequest', async (req, reply) => {
  if (!req.headers.authorization) return reply.code(401).send();
});

app.post('/api/things', {
  schema: {
    body: { type: 'object', required: ['name'], properties: { name: { type: 'string', minLength: 1 } } },
    response: {
      201: { type: 'object', properties: { id: { type: 'integer' }, name: { type: 'string' } } }
    },
  },
}, async (req, reply) => {
  reply.code(201);
  return { id: 1, ...req.body };
});

// Schema validation failures arrive here with err.validation set.
app.setErrorHandler((err, _req, reply) => {
  if (err.validation) return reply.code(400).send({ error: err.validation });
  reply.code(500).send({ error: 'Internal' });
});

app.listen({ port: 3000 });
```

Notice the response schema on the Fastify route: that’s what triggers the compiled serializer. Declaring only the request schema but not the response schema gives you validation without the serialization speedup. Both matter for Fastify’s performance advantage.

TypeScript story: who feels native

Fastify ships first-party type definitions with TypeScript-first ergonomics, and the v5 line has clean generics for request/reply types. With a TypeBox or Zod type provider, you declare a schema and get the request body typed automatically, with no manual type assertions.

```typescript
// Typing via route generics: req.body.name is typed without a cast,
// but the type is declared by hand rather than derived from a schema
app.post<{ Body: { name: string } }>('/things', async (req) => {
  return { ok: true, name: req.body.name };
});
```

```typescript
// With TypeBox for full inference from JSON Schema.
// Inference needs a type provider; this assumes @fastify/type-provider-typebox is installed.
import { Type } from '@sinclair/typebox';
import type { TypeBoxTypeProvider } from '@fastify/type-provider-typebox';

const typedApp = app.withTypeProvider<TypeBoxTypeProvider>();

const BodySchema = Type.Object({ name: Type.String() });

typedApp.post('/things', {
  schema: { body: BodySchema },
}, async (req) => {
  // req.body is inferred as { name: string } from BodySchema
  return { ok: true, name: req.body.name };
});
```

Express on TypeScript is fine but never quite native. You either declare your own typed request helpers or you live with req.body: any and a z.parse() at the top of every handler. Working code, more ceremony.

Plugin ecosystem reality check

Express has more plugins. Fastify has better plugins. Both statements are true in 2026.

| Need | Express | Fastify |
|---|---|---|
| JSON body | express.json() (built-in) | Built-in |
| File uploads | multer | @fastify/multipart |
| Sessions | express-session + Redis store | @fastify/session |
| JWT auth | jsonwebtoken + middleware | @fastify/jwt |
| Rate limit | express-rate-limit | @fastify/rate-limit |
| OpenAPI | swagger-jsdoc + serve | @fastify/swagger (auto from schema) |
| CORS | cors | @fastify/cors |
| Security headers | helmet | @fastify/helmet |
| OAuth strategies | passport.js (500+ strategies) | @fastify/oauth2 (limited providers) |
| WebSockets | ws + custom integration | @fastify/websocket |
| Static files | express.static() | @fastify/static |

The OAuth row is where Express still wins. If your auth involves Google + Apple + Microsoft + GitHub + a SAML enterprise SSO, Passport’s strategy zoo is far ahead of anything Fastify has natively. If you are rolling JWT yourself, the framework barely matters: the auth model is the same on both.

NestJS: the third path worth knowing about

NestJS sits on top of Express (default) or Fastify and adds Angular-style dependency injection plus opinionated structure. If your team comes from a Java or .NET background and wants decorators, DI containers, and enforced module boundaries, NestJS is the answer — not Express vs Fastify.

Switching the underlying HTTP adapter in NestJS from Express to Fastify is a meaningful optimization that usually doesn’t require changing application code, provided your controllers don’t reach for Express-specific request or response objects:

```typescript
// Default NestJS (Express adapter)
import { NestFactory } from '@nestjs/core';
import { AppModule } from './app.module';

async function bootstrap() {
  const app = await NestFactory.create(AppModule);
  await app.listen(3000);
}

bootstrap();
```

```typescript
// NestJS with Fastify adapter: same application code, different HTTP layer
import { NestFactory } from '@nestjs/core';
import { FastifyAdapter, NestFastifyApplication } from '@nestjs/platform-fastify';
import { AppModule } from './app.module';

async function bootstrap() {
  const app = await NestFactory.create<NestFastifyApplication>(
    AppModule,
    new FastifyAdapter({ logger: true })
  );
  await app.listen(3000);
}

bootstrap();
```

The Fastify adapter in NestJS gives you Fastify’s throughput gains without rewriting your controllers. Worth doing if NestJS is already your framework choice and you’re hitting throughput ceilings; you can swap the adapter in an afternoon.

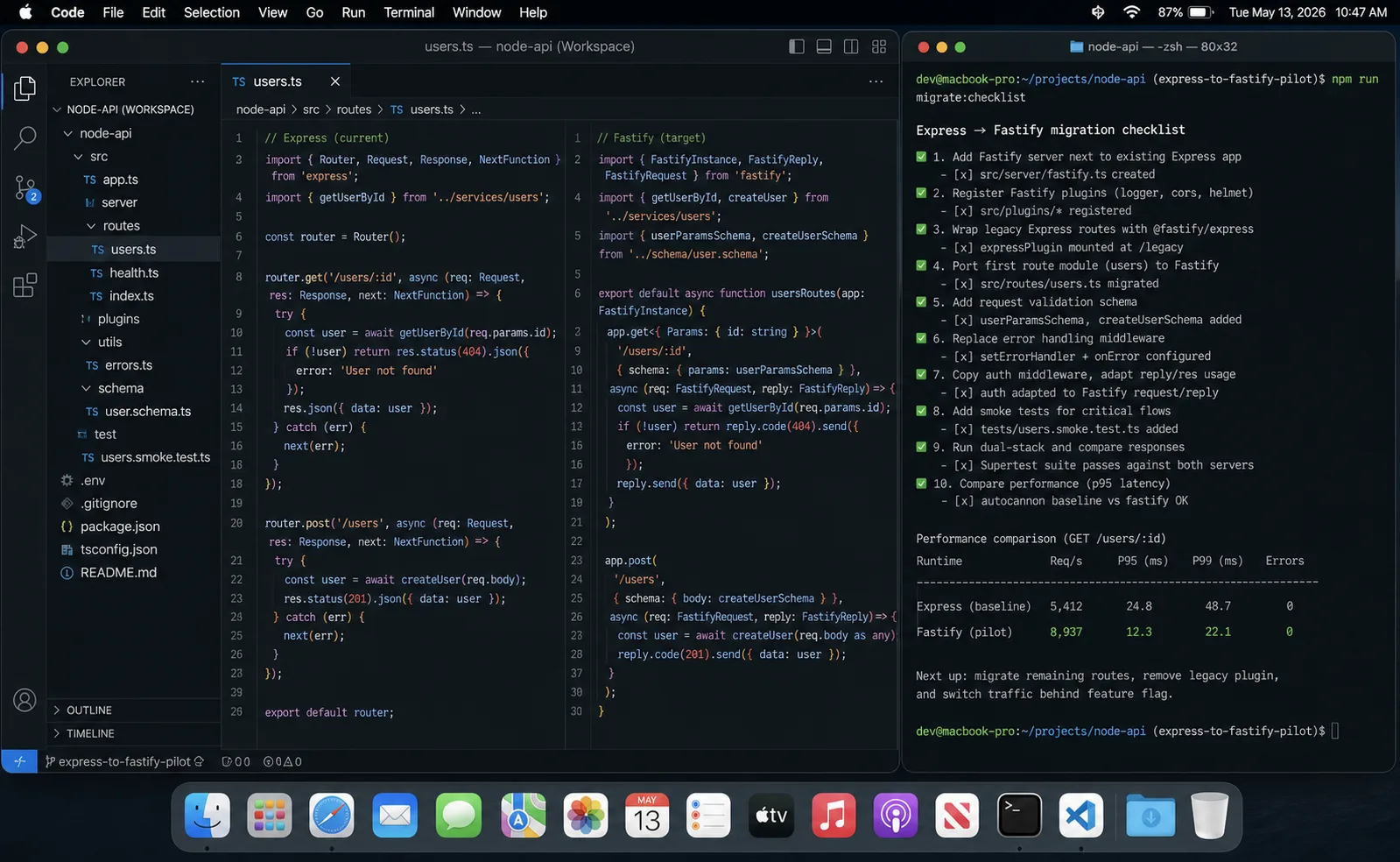

Migration path: Express to Fastify without a rewrite

The smart way to move is incremental. Fastify can mount an Express app as a sub-route via @fastify/express, so you migrate one route at a time and keep the legacy stack alive until the last one moves.

```javascript
import Fastify from 'fastify';
import expressPlugin from '@fastify/express';
import legacyApp from './legacy-express-app';

const app = Fastify();

// Adds app.use() so Express apps and middleware can be mounted.
await app.register(expressPlugin);

// Everything under /legacy is still served by the old Express app.
app.use('/legacy', legacyApp);

// New routes are native Fastify.
app.get('/v2/things', async () => ({ migrated: true }));

await app.listen({ port: 3000 });
```

There’s also @fastify/middie for using Express middleware directly without the full Express compatibility layer. It adds less overhead than @fastify/express and works for middleware that only inspects req without calling res.send().

Three rules I follow on these migrations:

- Move the hot routes first. The 5× speedup is real on routes that actually feel framework overhead. CRUD endpoints behind a slow database see almost nothing.

- Convert middleware to hooks one at a time. Auth check first; logging next; rate limit after that. Each hook should land in a separate PR so you can roll back individually.

- Keep both routers running for a sprint. Set up a route-level dashboard and watch p99 latency per route on both stacks. Cut over only when the new path is consistently better.
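For the third rule, the comparison I have in mind looks roughly like this. It is a minimal sketch: the latency samples are invented, `shouldCutOver` is a hypothetical name, and the percentile uses the simple nearest-rank method rather than whatever interpolation your metrics stack applies.

```javascript
// Nearest-rank percentile: the smallest sample that has at least p% of
// all samples at or below it.
function percentile(samples, p) {
  if (samples.length === 0) throw new RangeError('no samples');
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Hypothetical latency samples (ms) for one route, collected on both stacks.
const routeLatency = {
  express: [12, 15, 11, 40, 13, 14, 12, 16, 13, 95],
  fastify: [9, 10, 8, 22, 9, 11, 10, 9, 10, 31],
};

// Cut over only if the new stack wins at the tail and does not lose at the median.
function shouldCutOver(oldSamples, newSamples) {
  return percentile(newSamples, 99) < percentile(oldSamples, 99)
    && percentile(newSamples, 50) <= percentile(oldSamples, 50);
}

console.log(percentile(routeLatency.express, 99), percentile(routeLatency.fastify, 99)); // 95 31
console.log(shouldCutOver(routeLatency.express, routeLatency.fastify)); // true
```

One window of samples is not "consistently better". In practice I run the same check per route over several days of traffic before flipping anything.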

Serverless deployment: checking platform adapter support

Express has battle-tested serverless adapters for every major platform: serverless-http, which covers AWS Lambda, Google Cloud Functions, and Azure Functions, has been in production for years. Fastify’s @fastify/aws-lambda works but has a smaller user base.

Before committing to Fastify on a serverless platform, verify:

- The specific platform adapter (Lambda, GCF, Azure) supports your Fastify version.

- The adapter is actively maintained — check GitHub commits and open issues.

- Cold start behavior under your actual handler setup. Fastify’s async plugin initialization can add cold-start time on Lambda if you don’t pre-warm with `await app.ready()`.
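The pre-warm pattern from the last item can be sketched without any framework at all. Everything here is a stand-in: `buildApp` plays the role of your Fastify factory, `handle` the role of the platform adapter, and `getReadyApp` is a hypothetical helper. The part that carries over to real code is caching the readiness promise at module scope so initialization runs once per cold start, not once per invocation.

```javascript
let initCount = 0;

// Stand-in for a framework instance with async plugin loading (hypothetical).
function buildApp() {
  return {
    async ready() {
      initCount += 1; // pretend plugins finish registering here
    },
    async handle(event) {
      return { statusCode: 200, body: JSON.stringify({ path: event.path }) };
    },
  };
}

// Module scope survives across warm invocations of the same container.
let appPromise;

function getReadyApp() {
  if (!appPromise) {
    const app = buildApp();
    appPromise = app.ready().then(() => app);
  }
  return appPromise;
}

// The function the serverless platform would call for each request.
async function handler(event) {
  const app = await getReadyApp();
  return app.handle(event);
}

Promise.all([handler({ path: '/a' }), handler({ path: '/b' })]).then((responses) => {
  console.log(responses.map((r) => r.statusCode), initCount); // [ 200, 200 ] 1
});
```

Because every request awaits the same promise, a plugin that fails to load surfaces as a rejected first invocation instead of a half-initialized app serving traffic.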

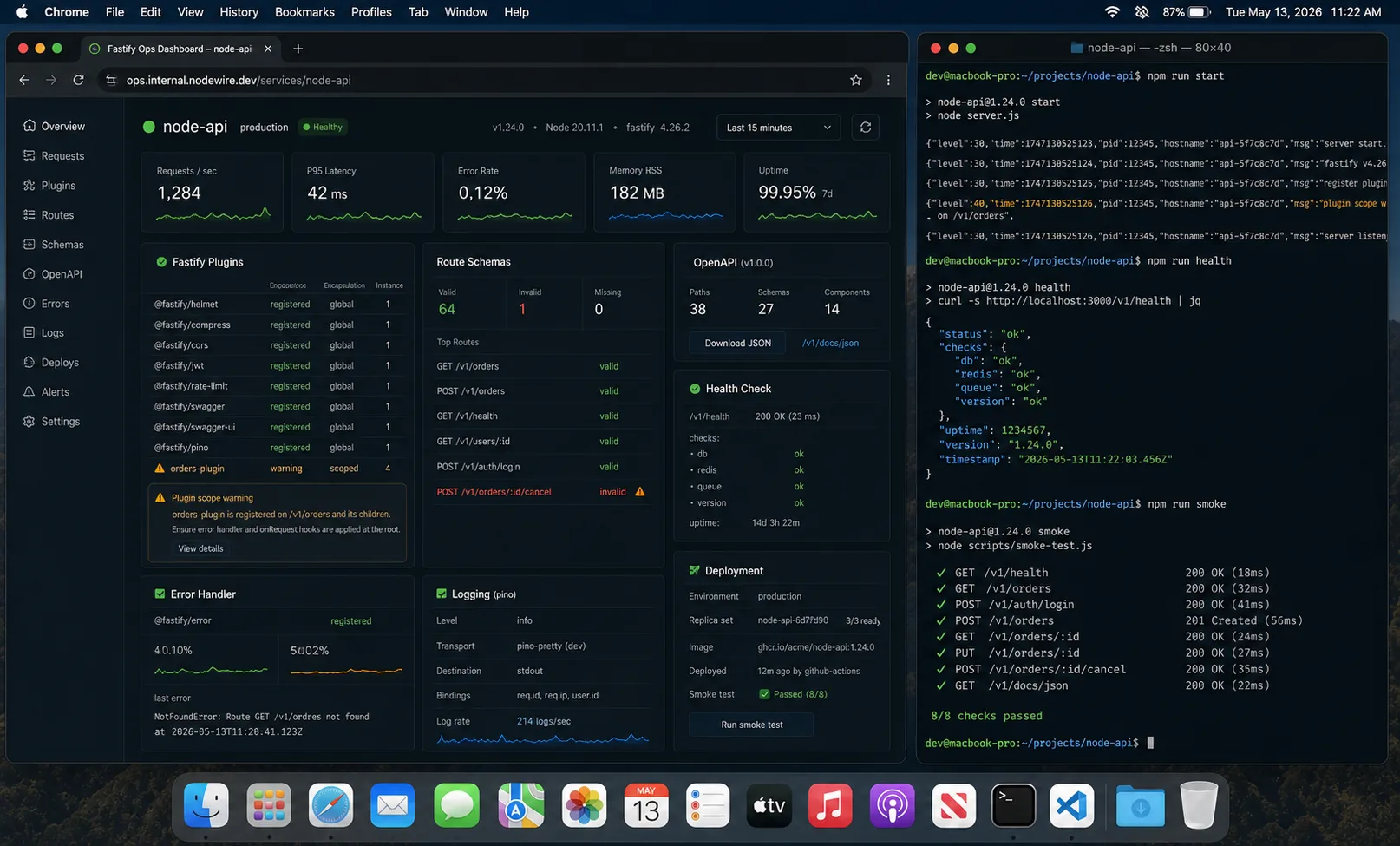

Production checklist when you commit to Fastify

- Declare a JSON Schema on every route, both request and response. Skip this and you lose the serialization speedup. The response schema is what triggers `fast-json-stringify`.

- Use the built-in pino logger. Don’t bolt on Winston; you will regret the throughput loss. pino integrates so cleanly that disabling it is rarely worth it.

- Replace middleware mental model with hooks. `onRequest`, `preHandler`, `onSend`, `onResponse`: they exist for a reason. Auth goes in `onRequest`; checks that need the parsed body go in `preHandler`, which runs after body parsing and validation.

- Understand plugin encapsulation. A plugin registered inside a `fastify.register()` scope is not available outside it. Use `fastify-plugin` to break encapsulation intentionally when you need shared decorators. This is powerful but surprises developers used to Express’s global middleware model.

- Set `app.setNotFoundHandler` early. The default 404 is a generic JSON body; you almost always want it to match your API’s own error format.

- Pin the body parser limit. Default is 1 MB and anything larger is rejected with a 413; set it explicitly so the limit shows up in code review.

- Call `await app.ready()` before handling traffic. Plugin loading is async. `listen()` waits for it on its own, but in serverless environments, where you never call `listen()`, skipping `ready()` hides initialization errors because the Lambda invocation triggers the first request before plugins finish registering.
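Encapsulation is the item that trips up Express veterans most, so here is a toy model of the visibility rule. It is not Fastify’s implementation and all names are hypothetical; the `encapsulate: false` option only mimics the effect of wrapping a plugin in `fastify-plugin`.

```javascript
// Toy model: each scope reads through to its parent, but writes stay local.
function createScope(parent = null) {
  // The prototype chain gives children read access to parent decorators.
  const decorators = Object.create(parent ? parent.decorators : null);
  return {
    decorators,
    decorate(name, value) {
      decorators[name] = value;
    },
    has(name) {
      return name in decorators;
    },
    // encapsulate: false runs the plugin against the current scope instead
    // of a fresh child, so whatever it adds is visible to siblings too.
    register(plugin, { encapsulate = true } = {}) {
      const target = encapsulate ? createScope(this) : this;
      plugin(target);
      return target;
    },
  };
}

const root = createScope();
root.decorate('config', { region: 'eu' });

// Encapsulated plugin: 'db' exists only inside the child scope.
const child = root.register((scope) => scope.decorate('db', { query: () => [] }));

// Shared plugin: 'metrics' lands on the root scope.
root.register((scope) => scope.decorate('metrics', { count: 0 }), { encapsulate: false });

console.log(child.has('config'), child.has('db')); // true true
console.log(root.has('db'), root.has('metrics')); // false true
```

The failure mode to expect in a migration is the second line: something decorated inside one plugin is simply absent in a sibling, with no error until a route tries to use it.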

When not to use Fastify at all

Three cases where I tell clients to stay on Express, and one where I tell them to stay on Fastify and not migrate “back” either:

- The app is primarily a webhook receiver and an OAuth gateway. Passport’s coverage of auth providers is unmatched, and webhook signature verification has more battle-tested Express middleware than Fastify.

- The team has zero schema-first experience. Fastify without schemas is “Express minus the ecosystem,” which is a worse trade than just staying.

- You inherited a Sequelize + express-session + Redis app that works. The migration cost won’t pay itself back unless you are also rewriting the data layer, in which case the ORM choice matters more than the framework choice.

- You went Fastify and now want to migrate to NestJS. Don’t. NestJS is a different abstraction (decorators, DI containers) and the migration cost is higher than the value, unless you have a team that demands the Java-flavoured structure. If you do move, run NestJS on its Fastify adapter so the HTTP layer stays the same.

Troubleshooting FAQ

Is Fastify really 5× faster than Express in production?

No. The 5× number is framework overhead only. With a database, JSON parsing of real payloads, validation, logging, and one downstream HTTP call, the gap drops to 1.5× to 2.5× in my own measurements. Still meaningful for high-throughput APIs; irrelevant for a typical CRUD app under 200 req/s.

Can I use Express middleware in Fastify?

Yes, via @fastify/express (full compatibility, higher overhead) or @fastify/middie (lighter compatibility, works for middleware that doesn’t call res.send). You give up some performance in exchange. Useful for migration, never for new code.

Does Express 5 close the gap with async error handling?

Partially. Express 5 finally catches async errors thrown from route handlers without express-async-errors. Throughput is roughly the same as Express 4 — the async fix is a quality-of-life win, not a perf win.
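For context, this is the kind of wrapper Express 4 codebases carried around before the fix. It is a minimal sketch with a hypothetical name (`asyncHandler`) and stand-in `req`/`res` objects, so it runs without Express installed; the `(req, res, next)` shape is the only thing it borrows from the framework.

```javascript
// Wraps a handler so rejected promises (and sync throws) reach next(err)
// instead of becoming unhandled rejections.
const asyncHandler = (handler) => (req, res, next) => {
  Promise.resolve()
    .then(() => handler(req, res, next))
    .catch(next);
};

// A route that fails asynchronously.
const failingRoute = asyncHandler(async () => {
  throw new Error('db unavailable');
});

// Stand-in for Express calling the route with its next() callback.
let forwardedError = null;
failingRoute({}, {}, (err) => {
  forwardedError = err;
});

setTimeout(() => console.log(forwardedError.message), 0); // db unavailable
```

On Express 5 you can delete wrappers like this, which is where the quality-of-life win comes from.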

Should I pick NestJS over both?

Different question. NestJS sits on top of Express or Fastify and adds Angular-style dependency injection plus opinionated structure. Pick NestJS if you have a 10+ engineer team that needs the structure to ship consistently. Skip it for solo or small-team projects where the boilerplate slows you down. If you choose NestJS, you can still get Fastify’s speed by swapping the adapter.

Is Fastify production-ready in 2026?

Yes. Fastify powers production traffic at companies including Microsoft Azure, American Express, and a long list of smaller shops. The plugin ecosystem matters more than maturity at this point.

What about Hono as an alternative?

Hono is worth knowing about for edge and non-Node runtimes (Cloudflare Workers, Deno, Bun), where Fastify either can’t run or isn’t a natural fit. On a vanilla Node.js server, Fastify is still the better default: more battle-tested, with broader Node-specific plugin coverage. If you are picking the runtime as well, Hono is the cross-runtime choice that keeps your options open.

What about the Fastify v5 breaking changes?

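Migration from v4 to v5 is a few hours for most apps. The main changes: the variadic listen(port, host) signature is gone in favor of an options object, route schemas must be full JSON Schema objects rather than shorthand, and the minimum Node version is 20. Worth doing, because v5 is the version that gets long-term plugin support.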

Do framework choices matter more than database choices?

No. Your database design and your ORM choice drive 80% of API performance once the load is real. Pick the framework you will be productive in and spend your tuning budget on the data layer.

Is Express still relevant with 92 million weekly downloads?

Yes, emphatically. 92M downloads/week reflects a decade of production usage and the install base of millions of existing projects. The trend for new projects favors Fastify — but “new project starts” and “total npm installs” tell different stories. Express isn’t going anywhere; it’s just no longer the obvious default for greenfield work.

Verdict

For a new Node.js backend in 2026, Fastify is the default. The schema-first design, native TypeScript types, and lower framework overhead pay off across the lifetime of a project, even if you never see the headline 5× number in production. The 435 contributors and recent v5.8.4 release tell a maintenance story that Express’s 1 commit in 30 days can’t match.

For an existing Express app that ships and earns, the only good migration reason is a measured bottleneck. “Fastify is faster” is not a measurement; it is marketing. Profile your hot routes, prove framework overhead is the actual problem, then migrate that subset.

The wrong question is “which is better?” The right question is “what does my throughput graph look like, how much of the latency is mine to optimise, and do I have the team bandwidth to learn schema-first API design?”